Overview

Prime Video, Amazon's video streaming service, has explained how it re-architected the audio/video quality inspection solution to reduce operational costs and address scalability problems. It moved the workload to EC2 and ECS compute services, and achieved a 90% reduction in operational costs as a result.

The Video Quality Analysis (VQA) team at Prime Video created the original tool for inspecting the quality of audio/video streams. The tool could detect various user-experience quality issues and trigger appropriate repair actions.

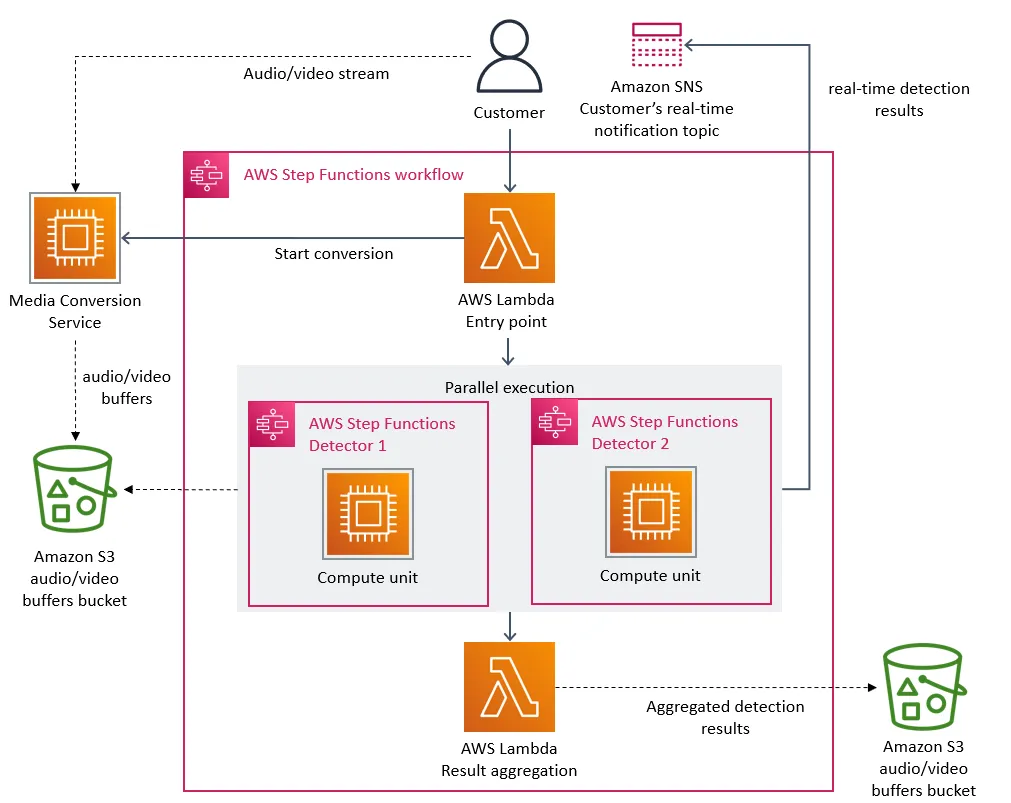

The initial architecture of the solution was built from microservices, each responsible for executing one step of the overall analysis process, on top of a serverless infrastructure stack. The microservices included components that split audio/video streams into video frames or decrypted audio buffers, as well as components that detected various stream defects by analyzing those frames and audio buffers with machine-learning algorithms. AWS Step Functions served as the primary process orchestration mechanism, coordinating the execution of several AWS Lambda functions. All audio/video data, including intermediate work items, was stored in Amazon S3 buckets, and an Amazon SNS topic was used to deliver analysis results.
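To make the data flow of this distributed design concrete, here is a toy Python model. It assumes that each step is a separate function (standing in for a Lambda invocation) that exchanges frames only through an object store (a dict standing in for an S3 bucket), and that the orchestrator records one state transition per step. All names (`ObjectStore`, `split_stream`, `detect_defects`, `run_distributed`) are hypothetical; this is an illustration, not Prime Video's code.

```python
from dataclasses import dataclass, field


@dataclass
class ObjectStore:
    """Hypothetical in-memory stand-in for an S3 bucket that counts requests."""
    objects: dict = field(default_factory=dict)
    puts: int = 0
    gets: int = 0

    def put(self, key: str, value: bytes) -> None:
        self.puts += 1
        self.objects[key] = value

    def get(self, key: str) -> bytes:
        self.gets += 1
        return self.objects[key]


def split_stream(store: ObjectStore, stream_id: str, second: int,
                 frames_per_second: int) -> list[str]:
    """Hypothetical converter step: writes every frame as its own object."""
    keys = []
    for i in range(frames_per_second):
        key = f"{stream_id}/{second}/frame-{i}"
        store.put(key, bytes([i % 256]))  # placeholder frame payload
        keys.append(key)
    return keys


def detect_defects(store: ObjectStore, keys: list[str]) -> int:
    """Hypothetical detector step: reads every frame back from the store."""
    defects = 0
    for key in keys:
        if store.get(key) == b"\x00":  # toy rule: a zero byte counts as a defect
            defects += 1
    return defects


def run_distributed(stream_id: str, seconds: int, frames_per_second: int,
                    detectors: int):
    """Runs the toy pipeline and counts orchestrator state transitions."""
    store = ObjectStore()
    transitions = 0
    results = []
    for second in range(seconds):
        keys = split_stream(store, stream_id, second, frames_per_second)
        transitions += 1  # one state for the split step
        for _ in range(detectors):
            results.append(detect_defects(store, keys))
            transitions += 1  # one state per detector step
    return store, transitions, results
```

With 10 seconds of stream at 5 frames per second and three detectors, this model issues 50 writes, 150 reads, and 40 state transitions. Every additional detector adds another full read of every frame, which is why storage requests and orchestration steps grow with both stream length and the number of detectors.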

The team designed the distributed architecture to allow for horizontal scalability and relied on serverless computing and storage to shorten implementation timelines. After operating the solution for a while, however, they started running into problems: the architecture turned out to support only around 5% of the expected load.

Marcin Kolny, senior software engineer at Prime Video, shares his team's assessment of the original architecture:

> While onboarding more streams to the service, we noticed that running the infrastructure at a high scale was very expensive. We also noticed scaling bottlenecks that prevented us from monitoring thousands of streams. So, we took a step back and revisited the architecture of the existing service, focusing on the cost and scaling bottlenecks.

The high operational cost was caused by a large volume of reads and writes to the S3 bucket storing intermediate work items (video frames and audio buffers), as well as by a large number of Step Functions state transitions.

The scaling bottleneck came from reaching the account limit on the overall number of state transitions, because orchestrating the process required several state transitions for every second of analyzed audio/video stream.
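Because the number of state transitions grows linearly with the amount of stream time analyzed, a back-of-the-envelope calculation shows how quickly it adds up. The sketch below uses purely hypothetical inputs (1,000 streams, 4 transitions per analyzed second, a 5,000 transitions-per-second account quota, and a price of $0.025 per 1,000 transitions); the article does not give Prime Video's actual figures, and none of these numbers should be read as quoted AWS limits or prices.

```python
SECONDS_PER_DAY = 24 * 3600


def daily_state_transitions(streams: int, transitions_per_second: int) -> int:
    """State transitions per day when every stream is analyzed continuously."""
    return streams * transitions_per_second * SECONDS_PER_DAY


def daily_transition_cost(transitions: int, price_per_1000: float) -> float:
    """Orchestration cost per day; price_per_1000 is an input, not a quoted AWS price."""
    return transitions / 1000 * price_per_1000


def streams_within_quota(quota_per_second: int, transitions_per_second: int) -> int:
    """Largest number of concurrent streams whose combined rate stays within the quota."""
    return quota_per_second // transitions_per_second


# Hypothetical inputs, chosen only to illustrate the scaling behavior.
transitions = daily_state_transitions(streams=1_000, transitions_per_second=4)
print(transitions)                                          # 345600000
print(round(daily_transition_cost(transitions, 0.025), 2))  # 8640.0
print(streams_within_quota(5_000, 4))                       # 1250
```

Under these assumptions, both the daily bill and the quota ceiling are driven by the per-second transition count: halving the transitions per analyzed second would halve the cost and double the number of streams that fit under the quota.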

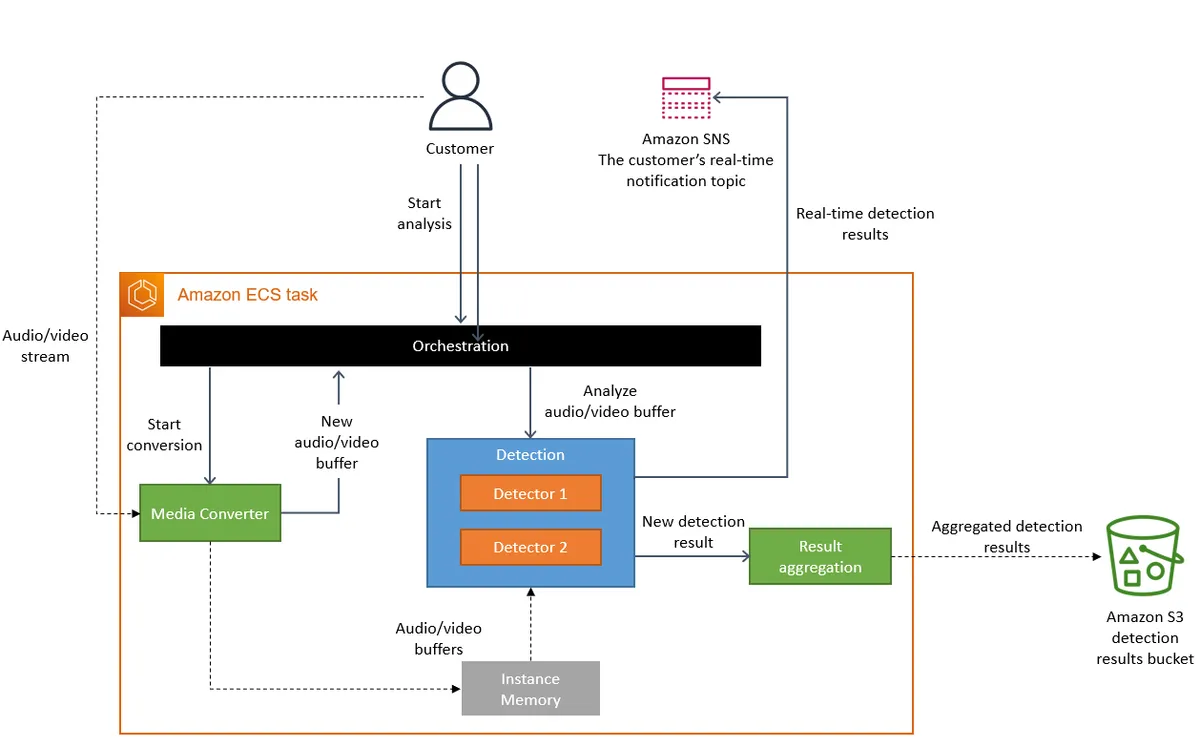

In the end, the team decided to consolidate all of the business logic in a single application process. Kolny summarizes the revised design:

> We realized that distributed approach wasn't bringing a lot of benefits in our specific use case, so we packed all of the components into a single process. This eliminated the need for the S3 bucket as the intermediate storage for video frames because our data transfer now happens in the memory. We also implemented orchestration that controls components within a single instance.
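A minimal sketch of the single-process idea in Python follows, assuming a hypothetical `decode_frames` converter and two toy detectors. Frames flow from the converter to every detector as in-memory objects, and a small in-process loop takes the place of the external orchestrator, so no object-store requests or external state transitions are needed per second of stream. The names and detection rules are illustrative only and do not reflect Prime Video's actual detectors.

```python
from typing import Callable, Iterable, Iterator

Frame = bytes
Detector = Callable[[Frame], bool]


def decode_frames(seconds: int, frames_per_second: int) -> Iterator[Frame]:
    """Hypothetical converter: yields frames in memory instead of writing them to S3."""
    for second in range(seconds):
        for i in range(frames_per_second):
            yield bytes([(second + i) % 256])  # placeholder frame payload


def black_frame(frame: Frame) -> bool:
    """Toy detector: flags frames whose payload is zero."""
    return frame == b"\x00"


def marker_frame(frame: Frame) -> bool:
    """Toy detector: flags a made-up marker byte value."""
    return frame == b"\xff"


def analyze(frames: Iterable[Frame], detectors: dict[str, Detector]) -> dict[str, int]:
    """In-process orchestration: each frame is handed directly to every detector."""
    counts = {name: 0 for name in detectors}
    for frame in frames:
        for name, detect in detectors.items():
            if detect(frame):
                counts[name] += 1
    return counts


result = analyze(decode_frames(10, 5), {"black": black_frame, "marker": marker_frame})
print(result)  # {'black': 1, 'marker': 0}
```

Because `decode_frames` is a generator, each frame is produced, inspected by all detectors, and discarded before the next one is decoded, so memory use stays bounded regardless of stream length.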

The resulting architecture had the entire stream analysis process running in ECS on EC2, with groups of detector instances distributed across different ECS tasks to avoid hitting vertical scaling limits when adding new detector types.
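One way to picture "groups of detector instances distributed across different ECS tasks" is as a packing problem: each detector type has an estimated resource cost, and detectors are assigned to tasks so that no task exceeds its capacity. The sketch below uses first-fit decreasing bin packing. The detector names, their vCPU figures, and the per-task capacity are all hypothetical, and the article does not say how Prime Video actually forms its groups.

```python
def group_detectors(costs: dict[str, float], task_capacity: float) -> list[list[str]]:
    """Assign detector types to tasks using first-fit decreasing bin packing."""
    for name, cost in costs.items():
        if cost > task_capacity:
            raise ValueError(f"detector {name!r} needs {cost}, more than one task provides")
    tasks: list[list[str]] = []
    loads: list[float] = []
    # Place the most expensive detectors first; this tends to leave fewer gaps.
    for name, cost in sorted(costs.items(), key=lambda item: item[1], reverse=True):
        for index, load in enumerate(loads):
            if load + cost <= task_capacity:
                tasks[index].append(name)
                loads[index] += cost
                break
        else:
            # No existing task has room, so start a new one.
            tasks.append([name])
            loads.append(cost)
    return tasks


# Hypothetical detector costs in vCPUs and a hypothetical 4-vCPU task size.
costs = {"black_frame": 1.5, "audio_silence": 0.5, "frozen_frame": 2.0,
         "av_sync": 1.0, "block_corruption": 3.0}
print(group_detectors(costs, 4.0))
# [['block_corruption', 'av_sync'], ['frozen_frame', 'black_frame', 'audio_silence']]
```

In this framing, adding a new detector type either fills spare capacity in an existing task or opens a new task, rather than making a single instance ever larger, which mirrors the motivation of avoiding vertical scaling limits.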

After rolling out the revised architecture, the Prime Video team was able not only to reduce costs by 90% but also to secure further savings by using EC2 savings plans. The architectural changes also addressed unforeseen scalability limitations that had prevented the solution from handling all streams viewed by customers.